How to improve the quality of scientific research

An international team of experts has produced a “manifesto” setting forth steps to improve the quality of scientific research.

John Ioannidis is the senior author of the article, which was published in the inaugural issue of Nature Human Behaviour. He is the Director of the Prevention Research Center at Stanford University and holds the university’s C.F. Rehnborg Chair in Disease Prevention. He is also Professor of Statistics at the Stanford University School of Humanities and Sciences, one of the two Directors of the Meta-Research Innovation Center at Stanford, and a member of the Stanford Cancer Institute and the Stanford Cardiovascular Institute.

“There is a way to perform good, reliable, credible, reproducible, trustworthy, useful science. We have ways to improve compared with what we’re doing currently, and there are lots of scientists and other stakeholders who are interested in doing this,” said Ioannidis.

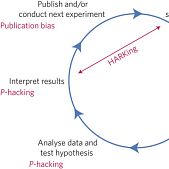

Each year, the U.S. government spends nearly $70 billion on nondefense research and development, including a budget of more than $30 billion for the National Institutes of Health. Yet research on how science is conducted—so-called meta-research—has made clear that a substantial number of published scientific papers fail to move science forward. One analysis, wrote the authors, estimated that as much as 85 percent of the biomedical research effort is wasted. One reason for this is that scientists often find patterns in noisy data, the way we see whales or faces in the shapes of clouds. This effect is more likely when researchers apply hundreds or even thousands of different analyses to the same data set until statistically significant effects appear.
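To see why running many analyses on one data set so readily produces spurious "significant" findings, a rough back-of-the-envelope sketch can help. It is not taken from the manifesto. It assumes every analysis tests an effect that does not really exist, that the analyses are independent of one another, and that the conventional 0.05 significance threshold is used; the function name is purely illustrative.

```python
def chance_of_false_positive(num_analyses: int, alpha: float = 0.05) -> float:
    """Probability that at least one of `num_analyses` independent tests
    of a nonexistent effect comes out 'significant' at threshold `alpha`.

    Assumption (not from the article): the tests are independent and
    every null hypothesis is true.
    """
    # Each test avoids a false positive with probability (1 - alpha);
    # all of them avoid one with probability (1 - alpha) ** num_analyses.
    return 1.0 - (1.0 - alpha) ** num_analyses


if __name__ == "__main__":
    for k in (1, 10, 100, 1000):
        rate = chance_of_false_positive(k)
        print(f"{k:>5} analyses: {rate:.1%} chance of at least one false positive")
```

Under these simplified assumptions, a single analysis carries a 5 percent risk of a false positive, ten analyses carry roughly a 40 percent risk, and a hundred make at least one spurious result all but certain. Real analyses of the same data are usually correlated, so the exact figures would differ, but the direction of the effect is the same.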

The manifesto suggests it’s not just scientists themselves who are responsible for improving the quality of science, but also other stakeholders, including research institutions, scientific journals, funders and regulatory agencies. All, said Ioannidis, have important roles to play.

“It’s a multiplicative effect,” he said, “so you have all of these players working together in the same direction.” If any one of the stakeholders doesn’t participate in creating incentives for transparency and reproducibility, he said, it makes it harder for everyone else to improve. “Most of the changes that we propose in the manifesto are interrelated, and the stakeholders are connected as if by rubber bands. If you have one of them move, he or she may pull the others. At the same time, he or she may be restricted because others don’t move,” said Ioannidis.

The eight-page paper describing ways to improve science includes four major categories: methods, reporting and dissemination, reproducibility, and evaluation and incentives.

Methods could be improved, the authors reported, by designing studies to minimize bias—by blinding patients, doctors and other participants, and by registering the study design, outcome measures and analysis plan before the research begins—to prevent subsequent deviations from the study design, regardless of intriguing, serendipitous results. The authors also state that reporting and dissemination might be improved by eliminating “the file drawer problem,” the tendency of researchers to publish results that are novel, statistically significant or supportive of a particular hypothesis, while not publishing other valid but less interesting results. “The consequence,” wrote the authors, “is that the published literature indicates stronger evidence for findings than exists in reality.”

The file drawer effect is fueled, though, by the behavior of universities, journals, reviewers and funding agencies, and not just that of individual scientists, the authors write. One way funders and journals can help is by requiring all researchers to meet certain standards. For example, the Cure Huntington’s Disease Initiative has created an independent standing committee to evaluate proposals and provide disinterested advice to grantees on experimental design and statistical analysis. This committee doesn’t just set standards; it actually helps researchers meet those standards.

The ultimate goal is to get to the truth, Ioannidis said. “When we are doing science, we are trying to arrive at the truth. In many disciplines, we want that truth to translate into something that works. But if it’s not true, it’s not going to speed up computer software, it’s not going to save lives and it’s not going to improve quality of life.”

He said the goal of the manifesto is to increase the speed at which researchers get closer to the truth. “All these measures are intended to expedite the process of validation—the circle of generating, testing and validating or refuting hypotheses in the scientific machine.”
